I know Nvidia's ShadowPlay and Shield APIs use GPU encoding to produce H.264 streaming video with very little overhead and minimal lag. On the bright side, at higher bit-rates you can get a faster response time out of GPU encoding.

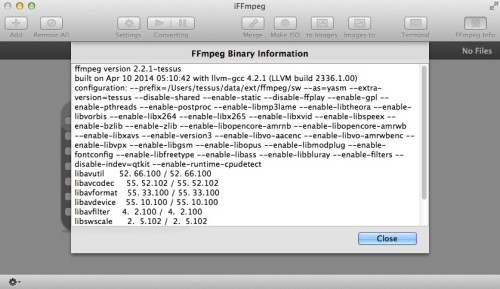

I've been hoping technology would improve to fix this, but I haven't seen it yet. I was disappointed but resigned myself to using the CPU only; even though it took longer, I only need to encode each video once. But when trying to nail down the tightest bit-rate, I noticed that at lower bit-rates CPU output always looked far better than GPU output. I am posting these here in case they are useful to someone else.

A few years ago I toyed around with this quite a lot myself, using MediaCoder to run a whole lot of test renders at varying bit-rates. I always wondered what these numbers looked like but could never find anything.

And yet, the GPU can outperform the CPU so easily. I am guessing that the CPU can still be considered top-of-the-line, whereas the GPU is decent but nowhere near the best.

Settings: downscale to 720p, set video bitrate to 3Mbps

Settings: keep original resolution, set video bitrate to 3Mbps

Here are the numbers from two different scenarios:

File 1 Input: 5.46GiB, 5728Kbps, 720p, 1h 48m

To do this, I had to compile ffmpeg with nvenc support.
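As a rough illustration of the two scenarios above, here is a minimal Python sketch that builds the ffmpeg command lines for a CPU encode (`libx264`) and a GPU encode (`h264_nvenc`) at 3Mbps, with and without the 720p downscale. The file names and the `build_ffmpeg_cmd` helper are hypothetical, the audio is simply copied, and the exact options used for the original tests are not known, so treat this as an approximation rather than the actual test setup. The `h264_nvenc` encoder is only available when ffmpeg was built with nvenc support.

```python
import shlex

def build_ffmpeg_cmd(src, dst, encoder, bitrate="3M", height=None):
    """Return an ffmpeg argument list for one test encode.

    encoder: "libx264" for CPU, "h264_nvenc" for GPU (needs an nvenc-enabled build).
    height:  target height in pixels, or None to keep the original resolution.
    """
    cmd = ["ffmpeg", "-i", src, "-c:v", encoder, "-b:v", bitrate]
    if height is not None:
        # -2 lets ffmpeg pick a width that keeps the aspect ratio and is even.
        cmd += ["-vf", f"scale=-2:{height}"]
    # Copy the audio stream untouched so only video encoding is compared.
    cmd += ["-c:a", "copy", dst]
    return cmd

if __name__ == "__main__":
    # Hypothetical input and output names, one command per scenario and encoder.
    for encoder in ("libx264", "h264_nvenc"):
        for height in (720, None):
            suffix = "720p" if height else "orig"
            out = f"out_{encoder}_{suffix}.mp4"
            print(shlex.join(build_ffmpeg_cmd("input.mkv", out, encoder, height=height)))
```

Each printed line can be pasted into a shell and timed to compare the two encoders on the same input. Note that the `scale` filter used here runs on the CPU even when the encode itself runs on the GPU.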

I recently got a new GTX 970 GPU, and I decided to give GPU encoding a try. I have an i7-4790K at 4.00GHz that I use for transcoding new videos with the mp4_automator tool, for maximum Plex client compatibility.
